ChatGPT is impressive. It can write, code, and answer complex questions in seconds; yet it can also produce completely wrong answers with total confidence. And that’s exactly the problem: most companies believe they are using a form of intelligence that understands, analyzes, and reasons like a human.

In reality, that’s not what’s happening.

Today, more and more companies are integrating tools like ChatGPT into their workflows. But behind the excitement, there’s a fundamental misunderstanding: many assume they are interacting with a system that truly “understands” what it says.

A large language model (LLM) such as ChatGPT, Claude, or Gemini has no consciousness and no human-like understanding of the world. Its core function is much simpler: given the text so far, it estimates how likely each possible next piece of text is and picks one of the most probable. Much as a person can guess how a familiar sentence will end, the model calculates probabilities, but at a massive scale and based on patterns learned from vast amounts of text.
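To make "predicting the next piece of text" concrete, here is a minimal Python sketch. It is a toy bigram counter, not how ChatGPT, Claude, or Gemini are built: those use large neural networks that take far more context into account. The corpus and the function names (`train_bigrams`, `next_word_probabilities`, `predict_next`) are hypothetical. The sketch only shows the core principle: a continuation is chosen because it is frequent, not because it is true.

```python
from collections import Counter, defaultdict

# A tiny, made-up training corpus (real models are trained on vastly more text).
corpus = [
    "the customer is always right",
    "the customer is happy",
    "the customer is always right",
    "the product is new",
]

def train_bigrams(sentences):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            counts[current][following] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn raw follow-up counts into a probability distribution."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()}

def predict_next(counts, word):
    """Return the single most probable next word, or None if the word was never seen."""
    probs = next_word_probabilities(counts, word)
    if not probs:
        return None
    return max(probs, key=probs.get)

model = train_bigrams(corpus)
print(next_word_probabilities(model, "is"))  # {'always': 0.5, 'happy': 0.25, 'new': 0.25}
print(predict_next(model, "is"))             # always
```

The toy model completes "is" with "always" only because that pair appears most often in its training text; it has no way to check whether the customer is, in fact, always right.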

To do this, text is broken down into "tokens," which are then converted into numbers. A token can be a word, part of a word, or a punctuation mark. For example, "marketing" is often a single token, while "extraordinary" may be split into several. Even a simple sentence like "Hello, how are you?" is split into several tokens (words and punctuation), and the exact count depends on the tokenizer a given model uses. Each token is then mapped to a vector, a list of numbers often called an "embedding," which lets the model work with relationships between concepts.
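The sketch below illustrates the idea with a tiny, invented vocabulary. It uses a simple greedy longest-match rule, which is an assumption made for illustration: production tokenizers, such as those behind ChatGPT, typically use byte-pair encoding with vocabularies of tens of thousands of pieces, and they are case-sensitive. The names `VOCAB`, `tokenize`, and `to_ids` are hypothetical.

```python
# Hypothetical vocabulary; each piece's position in the list is its numeric ID.
VOCAB = ["hello", "how", "are", "you", "marketing", "extra", "ordinary", ",", "?"]
TOKEN_IDS = {piece: i for i, piece in enumerate(VOCAB)}
UNKNOWN_ID = len(VOCAB)  # ID used for any piece not in the vocabulary
PIECES_LONGEST_FIRST = sorted(VOCAB, key=len, reverse=True)

def tokenize(text):
    """Greedy longest-match: at each position, take the longest vocabulary piece
    that matches; fall back to a single character if nothing matches."""
    text = text.lower()  # simplification: real tokenizers keep case
    tokens = []
    i = 0
    while i < len(text):
        if text[i].isspace():  # simplification: spaces are skipped, not tokenized
            i += 1
            continue
        match = next((p for p in PIECES_LONGEST_FIRST if text.startswith(p, i)), text[i])
        tokens.append(match)
        i += len(match)
    return tokens

def to_ids(tokens):
    """Convert tokens into the numbers the model actually works with."""
    return [TOKEN_IDS.get(t, UNKNOWN_ID) for t in tokens]

print(tokenize("Hello, how are you?"))          # ['hello', ',', 'how', 'are', 'you', '?']
print(to_ids(tokenize("Hello, how are you?")))  # [0, 7, 1, 2, 3, 8]
print(tokenize("extraordinary"))                # ['extra', 'ordinary']
```

In a real model, each of these IDs would then index a learned embedding vector.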

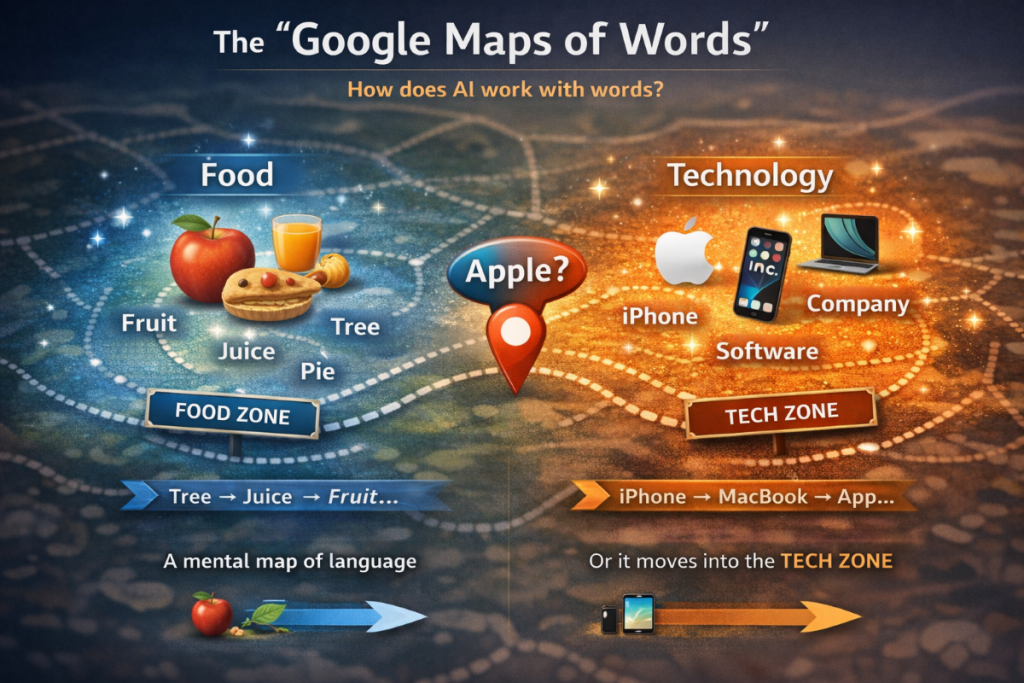

In this space, words that appear together, or in similar contexts, end up close to each other. The AI doesn't learn definitions; it learns patterns of usage.
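A minimal way to see how "appearing together" turns into "being close" is to describe each word by counting the words it shares a sentence with, and then compare those descriptions using cosine similarity. This is a simplified, count-based sketch; real models learn dense vectors with neural networks. The five-sentence corpus, mixing food and technology words, is made up.

```python
import math
from collections import Counter, defaultdict

# Made-up corpus: three food sentences, two technology sentences.
corpus = [
    "apple juice fruit sweet",
    "apple pie fruit dessert",
    "fruit tree apple orchard",
    "software company iphone launch",
    "company macbook software update",
]

def cooccurrence_vectors(sentences):
    """Represent each word by how often every other word shares a sentence with it."""
    vectors = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for w in words:
            for other in words:
                if other != w:
                    vectors[w][other] += 1
    return vectors

def cosine(a, b):
    """Cosine similarity of two sparse count vectors (1 = same direction, 0 = nothing shared)."""
    dot = sum(a[k] * b[k] for k in a if k in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

vectors = cooccurrence_vectors(corpus)
print(round(cosine(vectors["juice"], vectors["pie"]), 2))       # 0.67
print(round(cosine(vectors["juice"], vectors["software"]), 2))  # 0.0
```

"Juice" and "pie" never appear in the same sentence, yet they end up close because they share neighbours ("apple," "fruit"). This is the sense in which the model learns usage rather than definitions.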

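Let's take a first semantic cluster: food-related words. (The token counts below are typical for common English tokenizers and vary from one model to another.)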

apple = 1 token

fruit = 1 token

juice = 1 token

pie = 1 token

tree = 1 token

These words often appear together, so their representations become close in the model’s internal space.

Now, a completely different cluster: technology.

Apple = 1 token

iPhone = 1–2 tokens

MacBook = 1–2 tokens

software = 1 token

company = 1 token

Again, these words are strongly associated and form another cluster. And here’s the key point: the word “apple” belongs to both worlds.

In a food context: “apple” is close to “fruit,” “juice,” “pie”

In a technology context: “Apple” is close to “iPhone,” “MacBook,” “software”

So for the AI, "apple" doesn't have a single fixed meaning: in modern models, its internal representation shifts depending on the surrounding words. You can imagine this as a map, with one cluster for food, one for technology, and "apple" moving between them depending on the sentence.

For example:

“I eat an apple every day” activates the food cluster

“Apple released a new iPhone” activates the tech cluster

Same word, completely different position in the model’s internal space.
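The sketch below imitates that shift using hand-made two-dimensional vectors, with one axis for "food" and one for "technology". Every number is an assumption chosen for illustration. Averaging a word with its neighbours is only a crude stand-in for the attention mechanism that transformer models actually use to make representations depend on context. The names `EMBEDDINGS`, `contextual_vector`, and `nearest_cluster` are hypothetical.

```python
import math

# Hypothetical 2-D vectors: axis 0 = "food-ness", axis 1 = "tech-ness".
# "apple" on its own sits halfway between the two worlds.
EMBEDDINGS = {
    "apple": (0.5, 0.5),
    "eat": (0.9, 0.0),
    "fruit": (1.0, 0.0),
    "pie": (0.9, 0.1),
    "released": (0.1, 0.8),
    "iphone": (0.0, 1.0),
    "software": (0.0, 0.9),
}

def average(vectors):
    """Component-wise mean of a list of equal-length vectors."""
    return tuple(sum(axis) / len(vectors) for axis in zip(*vectors))

# Cluster centres computed from a few typical members of each cluster.
CLUSTERS = {
    "food": average([EMBEDDINGS["fruit"], EMBEDDINGS["pie"]]),
    "tech": average([EMBEDDINGS["iphone"], EMBEDDINGS["software"]]),
}

def contextual_vector(word, sentence):
    """Blend a word's own vector with those of the known words around it."""
    neighbours = [EMBEDDINGS[w] for w in sentence.lower().split()
                  if w in EMBEDDINGS and w != word]
    return average([EMBEDDINGS[word]] + neighbours)

def nearest_cluster(vector):
    """Name of the cluster centre closest to the vector (Euclidean distance)."""
    return min(CLUSTERS, key=lambda name: math.dist(vector, CLUSTERS[name]))

for sentence in ["I eat an apple every day", "Apple released a new iPhone"]:
    print(sentence, "->", nearest_cluster(contextual_vector("apple", sentence)))
# I eat an apple every day -> food
# Apple released a new iPhone -> tech
```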

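Another interesting aspect is that these relationships can be manipulated with simple arithmetic. In classic word-embedding models such as word2vec, for example:

"king" − "man" + "woman" ≈ "queen"

In other words, the vector closest to the result is the one for "queen." This is not true understanding; it's a mathematical relationship learned from patterns in data.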
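Here is that analogy arithmetic applied to hypothetical two-dimensional vectors, with one axis for "royalty" and one for gender. The values are invented; real word embeddings have hundreds of dimensions. As is common in word-analogy evaluations, the three input words are excluded before searching for the nearest vector. The names `VECTORS` and `analogy` are illustrative.

```python
import math

# Hypothetical vectors: axis 0 = "royalty", axis 1 = gender (+ male, - female).
VECTORS = {
    "king": (0.9, 0.8),
    "queen": (0.9, -0.8),
    "man": (0.1, 0.8),
    "woman": (0.1, -0.8),
    "prince": (0.7, 0.8),
    "apple": (0.0, 0.1),
}

def cosine(a, b):
    """Cosine similarity: 1 means the two vectors point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.hypot(*a) * math.hypot(*b))

def analogy(a, b, c):
    """Return the word whose vector is closest to vec(a) - vec(b) + vec(c),
    skipping the three input words themselves."""
    target = tuple(x - y + z for x, y, z in zip(VECTORS[a], VECTORS[b], VECTORS[c]))
    candidates = [w for w in VECTORS if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(VECTORS[w], target))

print(analogy("king", "man", "woman"))  # queen
```

Nothing in this code "knows" what a queen is: "queen" simply happens to be the nearest point to the computed coordinates.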

The AI learns structures, relationships, and patterns in language. It doesn't just repeat; it generalizes. This allows it to generate coherent, context-aware responses that can often feel insightful. And that's exactly what creates the illusion of intelligence. Human language carries reasoning, emotion, and logic, and the AI reproduces these patterns. It can sound as if it understands, argues, or even empathizes, while operating in a fundamentally different way from humans.

This illusion has real consequences in business. Many companies use AI as if it “knows.” They ask things like: “Create a marketing strategy for my business” or “Write a complete SEO article.” The result is often clean and structured, but generic, interchangeable, and rarely differentiated. In some cases, decisions are even made based on unverified outputs, simply because they sound convincing.

The issue is not that AI makes mistakes. The issue is that it can be wrong while sounding completely right. It doesn't aim for truth; it aims for plausibility, generating what is statistically most likely to "sound correct." This naturally pushes its outputs toward the average and toward consensus. But the majority is not always right, and it is rarely exceptional. This is why poorly guided AI use often leads to standardized thinking and content. At scale, the risk is clear: automating mediocrity.
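The pull toward the most common answer can be illustrated with the softmax function, which turns a model's raw scores into probabilities. The scores below are invented for this example. Always taking the top choice (greedy decoding) returns the most conventional option every time. Many LLM APIs expose a "temperature" setting: lowering it concentrates probability even further on that option, while raising it gives rarer continuations more of a chance. The names `LOGITS`, `softmax`, and `greedy_choice` are hypothetical.

```python
import math

# Hypothetical scores (logits) a model might assign to continuations of
# "Our marketing strategy should focus on ..."
LOGITS = {
    "social media": 3.0,
    "customer experience": 2.5,
    "brand storytelling": 2.0,
    "a contrarian niche": 0.5,
}

def softmax(scores, temperature=1.0):
    """Convert raw scores into probabilities; a lower temperature sharpens the distribution."""
    scaled = {k: v / temperature for k, v in scores.items()}
    top = max(scaled.values())  # subtract the max for numerical stability
    exps = {k: math.exp(v - top) for k, v in scaled.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def greedy_choice(scores):
    """Always pick the single highest-scoring continuation."""
    return max(scores, key=scores.get)

for t in (0.5, 1.0, 2.0):
    probs = softmax(LOGITS, t)
    print(t, {k: round(p, 2) for k, p in probs.items()})
print(greedy_choice(LOGITS))  # social media
```

Raising the temperature is not a cure: it adds variety, not judgment. The unusual option gets more probability whether or not it is actually the better idea.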

On the other hand, companies that truly benefit from AI use it differently. They don't treat it as a replacement for thinking, but as an amplifier. They provide direction, context, and hypotheses. Instead of asking for a full strategy, they say: "Here's our positioning and target audience. Challenge it and suggest improvements." Instead of delegating everything, they use AI to structure, refine, and enhance their own ideas.

In this context, AI becomes extremely powerful. It accelerates execution, explores variations, tests ideas, and optimizes outputs. But it remains dependent on human intelligence to guide it. Without direction, it reproduces what already exists. With strong intent, it becomes a performance multiplier.

It is therefore critical not to delegate certain functions:

Critical thinking remains essential, as AI can produce plausible but incorrect information.

Human creativity remains central, as it comes from experience, contradictions, and intuition, while AI primarily recombines existing patterns.

Finally, curiosity and the ability to challenge dominant ideas must be preserved, as AI naturally converges toward what is already established.

In conclusion, the limitations of LLMs are not bugs; they are direct consequences of how these models work. These tools are extraordinarily powerful, but only if we understand what they truly are. The companies that will succeed with AI are not those that use it the most, but those that use it intelligently: as a complement to human intelligence, not a replacement.

LG. NAGA PUTIH KONSULTING